Have you ever wondered what actually happens when you hit “Save” on an app, or how Google can find your search results in milliseconds? The magic lies in how databases store and retrieve data. In this blog, we’ll walk through the core ideas behind database internals — explained simply, with everyday analogies. No prior tech knowledge needed!

1. Data Structures That Power Your Database

Think of a database like a massive library. You can dump all the books on the floor (store data), but finding a specific book later would take forever. That’s why libraries use organized shelves, catalogs, and labeling systems. Databases do the same thing using data structures — special ways of organizing data so it can be stored and found efficiently.

At the heart of almost every database is an index — a separate data structure that acts like a library catalog. Instead of scanning every single row of data to find what you want, the database checks the index first, which points it directly to the right location. This makes reading data much faster, though it does add a small overhead to writing, since the index needs to be updated every time new data is added.

2. Hash Indexes

Imagine you have a locker room with hundreds of lockers. Instead of checking each locker one by one, you have a magical directory that says “Key 42 is in Locker 7B.” That magical directory is essentially a hash index.

A hash index uses a technique called hashing — it takes your data’s key (like a user ID or product name), runs it through a mathematical formula (a “hash function”), and converts it into a number. That number maps directly to a location in memory where the data is stored.

Pros: Blazing fast lookups. Finding a record is almost instantaneous — like having the exact locker number handed to you.

Cons: Hash indexes only work for exact matches. If you want to find all users whose name starts with “A”, a hash index won’t help — it can only tell you where “Alice” specifically is stored, not a range. They also struggle if all your data doesn’t fit in memory (RAM).

A real-world example is the storage engine used by Bitcask (a database storage format), which keeps all keys in memory as a hash map and stores values on disk.

3. SSTables and LSM-Trees

Now let’s level up. What if you’re dealing with millions of writes per second — like a social media platform recording every like, comment, and view? You need a smarter system.

SSTables (Sorted String Tables) are files where data is stored in sorted order by key. Think of them like a sorted dictionary — every entry is alphabetically ordered, so finding something is fast and efficient.

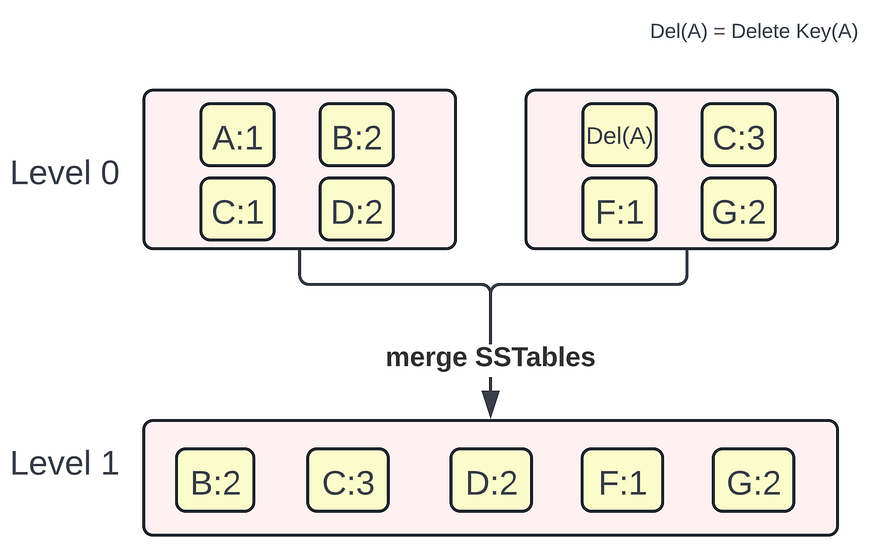

LSM-Trees (Log-Structured Merge-Trees) are a system that uses SSTables cleverly:

- When new data comes in, it’s first written to a small, fast in-memory structure called a memtable (think of it as a scratchpad).

- When the memtable gets full, it’s flushed to disk as an SSTable file.

- Periodically, multiple SSTables are merged together (called compaction) to keep things tidy and fast.

This design is excellent for write-heavy workloads. It’s used by popular databases like Cassandra, LevelDB, and RocksDB.

Analogy: Imagine a chef who jots orders on a notepad (memtable), then at the end of the shift, rewrites them neatly in a logbook (SSTable). Occasionally, old logbooks are reorganized and merged.

4. B-Trees

B-Trees are the most widely used indexing structure in traditional databases (like MySQL and PostgreSQL). If SSTables are like sorted filing cabinets, a B-Tree is like a perfectly organized hierarchical filing system — a tree where each node contains multiple keys and pointers to children.

Here’s a simple way to think about it: Imagine a company org chart, but instead of people, the nodes hold ranges of values. To find “Employee #5432,” you start at the top (the root), follow the pointer that says “4000–6000,” then narrow further, until you reach the exact record. Each step eliminates a huge chunk of the search space.

Key properties of B-Trees:

- Data is always kept sorted, making range queries (e.g., “find all orders between Jan and March”) very efficient.

- The tree always remains balanced, meaning every lookup takes roughly the same time regardless of how much data is stored.

- They work well on disk (not just memory), because each node typically corresponds to one disk page.

B-Trees are battle-tested and incredibly reliable, which is why they’ve been the backbone of relational databases for decades.

5. Comparing B-Trees and LSM-Trees

So which is better — B-Trees or LSM-Trees? The honest answer is: it depends on your use case.

Feature B-Trees LSM-Trees Write Speed Moderate Very fast Read Speed Very fast Moderate Storage Efficiency Moderate Better (compaction removes duplicates) Best for Read-heavy workloads Write-heavy workloads

B-Trees shine when you’re reading data frequently and writes are less common — think a banking system where you query account balances often but update them less.

LSM-Trees win when data is being written constantly — think logging systems, time-series data, or analytics pipelines where millions of events flood in every second.

In practice, both have quirks. B-Trees can suffer from fragmentation over time. LSM-Trees can have slower reads because they may need to check multiple SSTables before finding a value (though this is mitigated with filters like Bloom Filters).

6. Other Indexing Structures

Beyond B-Trees and hash indexes, there’s a whole zoo of specialized indexing structures designed for specific problems:

Multi-dimensional indexes handle data with multiple dimensions. Imagine searching for all restaurants within 5 km of you — that’s a 2D spatial search (latitude and longitude). Structures like R-Trees and k-d Trees are built exactly for this.

Full-text search indexes (like those used by Elasticsearch or the search bar in Google Docs) break text into individual words and index each word separately, allowing fast keyword searches across millions of documents.

In-memory indexes are used when the entire dataset fits in RAM, allowing extremely fast access — like Redis, a popular in-memory database used for caching.

The key takeaway: there’s no one-size-fits-all indexing solution. Engineers choose the right tool based on the access patterns of their application.

7. Transaction Processing or Analytics?

Databases serve two very different masters, and it’s important to understand the difference:

OLTP (Online Transaction Processing) — This is the day-to-day database work. When you buy something on Amazon, the database records your order, deducts stock, processes payment — all in real time. These operations are short, fast, and involve a small number of rows per query.

OLAP (Online Analytical Processing) — This is for business intelligence. Instead of one user’s order, an analyst might want to know: “What’s the total revenue from all orders in North America last quarter?” These queries scan millions of rows, aggregate data, and generate insights.

Running both on the same database is like having the same road used by delivery motorcycles (OLTP) and massive freight trucks (OLAP) — they interfere with each other. That’s why companies typically separate them.

8. Data Warehousing

A data warehouse is a separate database system designed specifically for analytical queries. Think of it as a “read-only reporting database” that gets a copy of data from all the production systems (your OLTP databases) through a process called ETL — Extract, Transform, Load.

- Extract: Pull data from various source systems (orders, users, payments, etc.)

- Transform: Clean it, standardize formats, and combine it

- Load: Insert the processed data into the warehouse

Popular data warehouses include Amazon Redshift, Google BigQuery, and Snowflake. Analysts and data scientists run complex queries here without touching the production database — keeping both systems healthy.

9. Stars and Snowflakes: Schemas for Analytics

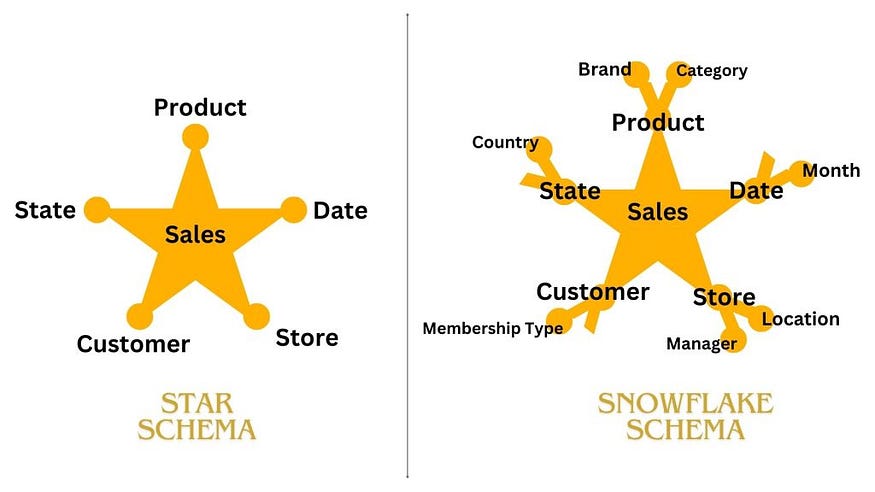

In a data warehouse, data is organized using special schemas optimized for analytical queries:

Star Schema — Imagine a star with a large “fact table” at the center (containing measurable events, like sales transactions) surrounded by “dimension tables” (like Date, Product, Customer, Store). The fact table connects to all dimensions, creating a star shape. This makes it easy and fast to query things like “Total sales by product category per month.”

Snowflake Schema — A variation of the star schema where the dimension tables are further broken down (normalized) into sub-dimensions. It looks like a snowflake because of the branching structure. It uses less storage but requires more table joins to answer a query.

For most analytics use cases, the star schema is preferred because of its simplicity and query speed, even if it stores some data redundantly.

10. Column-Oriented Storage

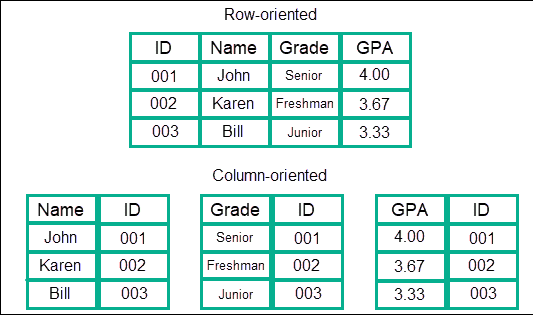

Traditional databases store data row by row — all columns for one record are stored together. This makes sense for OLTP (you often want all columns of a single record).

But for analytics, you usually want one column across millions of rows — like “give me all the sale prices from the last year.” In a row-oriented database, you’d have to load every full row just to extract one column — very wasteful.

Column-oriented storage flips this: it stores all values of one column together. So all sale prices are stored consecutively, all dates are stored consecutively, and so on. When your query only needs two or three columns, the database reads only those columns — skipping everything else.

This is a game-changer for analytics performance. Systems like Apache Parquet, Apache ORC, and Amazon Redshift use columnar storage.

11. Column Compression

Here’s a bonus that comes with column storage: data in a column tends to be repetitive. If you store the “country” column for a company operating in 5 countries, most values will repeat endlessly — “USA”, “USA”, “India”, “USA”, “Germany”…

Repetitive data compresses beautifully! A technique called Run-Length Encoding (RLE) can represent “USA” appearing 1,000 times in a row as simply “USA × 1000” instead of writing it out 1,000 times.

This means columnar databases not only read less data (only required columns) but also store that data in compressed form — using far less disk space and memory. Queries run faster, and storage costs drop significantly.

12. Sort Order in Column Storage

In column storage, you can choose to sort the rows by one or more columns. This might seem simple, but it has powerful effects.

For example, if you sort data by date, all January records cluster together on disk. A query asking “show me all January sales” can be answered by reading just one contiguous section of the file — instead of hunting across the entire dataset.

Sorting also helps compression further. If rows are sorted by country, then all “USA” entries appear together, making run-length encoding even more efficient.

Different queries benefit from different sort orders, which is why some systems (like Vertica) store multiple sorted copies of the same data — each optimized for different query patterns.

13. Writing to Column-Oriented Storage

There’s a catch with column storage: writes are harder. In a row-oriented database, inserting a new row is straightforward — just append a new record. But in a column-oriented store, you’d need to insert a new value into every column file — potentially touching hundreds of files on disk.

The solution is borrowed from LSM-Trees: writes go into an in-memory buffer first (fast and efficient), and periodically the buffer is merged with the on-disk columnar files in a batch operation. This way, the write path stays fast, and the column files remain well-organized for reads.

14. Aggregation: Data Cubes and Materialized Views

Analysts often run the same complex aggregations repeatedly — “Total revenue by region and product for each month.” Running this fresh every time is expensive.

Materialized Views are pre-computed query results stored on disk. Instead of recalculating from scratch every time, the database serves the cached result. The trade-off: the view must be refreshed whenever underlying data changes.

Data Cubes (also called OLAP Cubes) take this further. They pre-compute aggregations across all combinations of dimensions. Imagine a 3D cube where one axis is time, another is geography, and another is product. Every cell in the cube stores a pre-aggregated value (like total revenue). Queries against a data cube are incredibly fast — you’re just looking up a cell, not computing on the fly.

The downside: data cubes are rigid. You can only query along the dimensions that were pre-defined when the cube was built. Modern systems often prefer flexible materialized views over fixed cubes.

Summary

We’ve covered a lot of ground! Here’s the big picture:

Databases use clever data structures (B-Trees, hash indexes, SSTables, LSM-Trees) to make reads and writes fast. The right choice depends on whether you’re doing lots of writes or reads.

For analytics, databases are organized differently from everyday transactional databases — using data warehouses, star schemas, and column-oriented storage to handle massive scans efficiently.

Column compression and materialized views further optimize performance by reducing the amount of data read and pre-computing expensive aggregations.

Understanding these fundamentals gives you a mental model of what’s happening “under the hood” whenever you query a database — and it’s the foundation for making smart engineering decisions as you grow in your tech career. Happy learning!